- 13 Nov 2023

- 4 Minutes to read

- Print

- DarkLight

- PDF

Protecting Oracle Databases

- Updated on 13 Nov 2023

- 4 Minutes to read

- Print

- DarkLight

- PDF

Resources

Overview

Oracle provides an import and export feature in its recent versions called Datapump. Datapump consists of four components:

- expdp on the command line: This tool exports the database or tables of the database from a running Oracle instance to a flat file.

- impdp on the command line: This tool imports a flat file of the database or tables of the database into a running Oracle instance.

- DBMS_DATAPUMP PL/SQL package (Data Pump API): The command-line client expdp and impdp use the procedures provided in the DBMS_DATAPUMP PL/SQL package to execute export and import commands using the parameters entered at the command line. These parameters enable the exporting and importing of data and metadata for a complete database or for subsets of a database.

- DBMS_METADATAPL/SQL package (metadata API): The command-line client expdp and impdp use the procedures provided in the DBMS_METADATA PL/SQL package, which provides a centralized facility for the extraction, manipulation, and re–creation of metadata.

For further documentation, see Oracle’s Help Center.

Export

Below is a step-by-step guide for exporting your Oracle instance on Windows. You can follow analagous steps on other platforms.

On Windows, create the export directory. For example, create "D:\Data Pump". You can create it anywhere on your system or on your network where the expdp tool can access. Note that this step must be performed by the privileged user and that the directory must be created on the server.

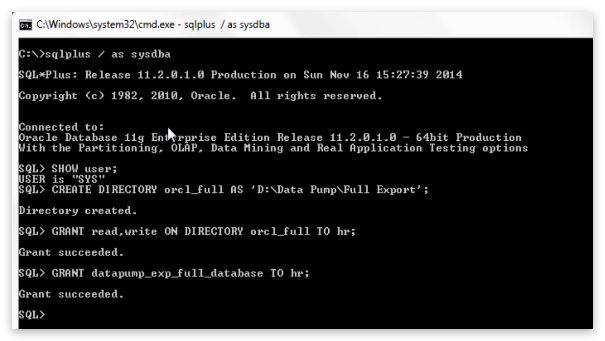

On the Oracle instance, create a Directory Object and grant it mandatory privileges.

SQL> CREATE DIRECTORY orcl_full AS ‘D:\Data Pump\full export’;

SQL> GRANT read, write ON DIRECTORY orcl_full TO hr;

SQL> GRANT DATAPUMP_EXP_FULL_DATABASE TO hr;

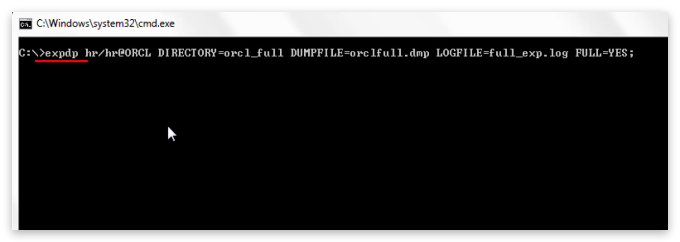

On Windows, open Command Prompt and run the expdp command.

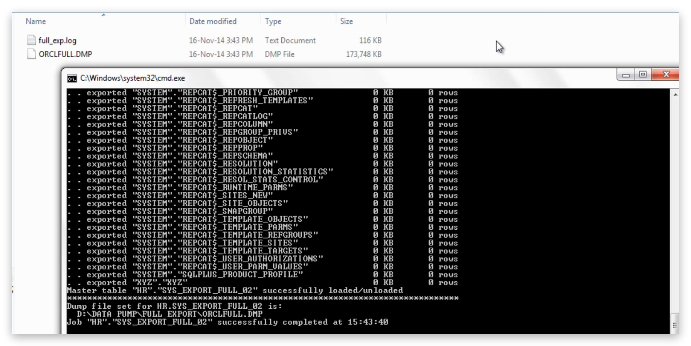

C:\>expdp hr/hr@ORCL DIRECTORY=orcl_full DUMPFILE=orclfull.dmp LOGFILE=full_exp.log FULL=YES;

Parameters used in above EXPDP command:

- User Login: The first parameter is a user login. Here you provide the login information of that user using which you want to perform the export (e.g. hr/hr@ORCL).

- DIRECTORY: Using DIRECTORY parameter you specify the default location to which Export can write the dump file set and the log file. Here in my case DIRECTORY parameter is set on the directory object orcl_full which we just created.

- DUMPFILE: Using DUMPFILE parameter you specify the names, and optionally, the directory objects of dump files for an export job. The dump file is made up of one or more disk files that contain table data, database object’s metadata and control information. These files are written in binary format and dump files can be imported only by data pump impdp import utility. As these files are written in binary format by server using expdp export utility thus they should not be tampered. Changing information in these files might compromise the data consistency which may cause an error while importing. You can supply multiple file names here separated by comma. Dot dmp is the default extension for dump file. If no extension is given then expdp export utility will use this default extension. Though you can specify whatever extension you want but it’s always advisable to use the oracle recommended extensions.

- Log file: Using log file parameter expdp export utility will generate a human readable log file for you which are very helpful in tracking the status of your export.

- FULL: This parameter full indicates that you want to perform a full database export. This parameter can have YES or NO values. If you set this parameter to YES that means expdp utility will export all the data and metadata of the database.

The resulting files should appear in Windows Explorer under the specified folder.

Import

- On Windows, open Command Prompt and run the expdp command.

C:\> impdp hr/hr@ORCL DIRECTORY=demo DUMPFILE=table1.dmp LOGFILE=sh_imp.log TABLES=table1;

Let’s have an in-depth look at the above impdp command and its parameters.

- Impdp: Command starts with impdp which is a data pump utility for loading an export dumpfile set into a target system.

- Hr/hr@ORCL: Followed by impdp command we have the login information of the user who wants to perform the import. Though specifying the SID (@ORCL) of your database is not mandatory yet if your system or server has multiple databases running in the background then it’s a good practice to avoid any conflict. You need not be a sysdba to perform export or import however you require some system privileges.

- DIRECTORY: Using directory parameter you can specify the default location in which the import job can find the dumpfile set and where it should create the log files. The value of directory parameter is always a directory object. Directory object is a name mapped over a directory path. Or you can say that it’s a pointer pointing over a directory where impdp utility can find the dumpfiles created by expdp data pump export.

- DUMPFILE: Using DUMPFILE parameter you can specify those dumpfiles which contains the tables which you want to import. If there are more than one dumpfiles then you can specify their names separated by commas.

- LOGFILE: Although LOGFILE parameter is optional yet it’s highly recommended to use it. Using this parameter you can generate and name a log-file which is written in human readable language and contain all the information regarding your import job. If there is an error using this file you can easily track them.

- TABLES: First using this parameter you specify that you are performing table-mode export. Second using this parameter you can specify the name of the tables which you want to import.

this parameter you can specify the name of the tables which you want to import.

Last Update: March 29, 2017